Most people use the phrase "AI agent social media automation" to mean something much broader than generating captions.

What they usually want is this:

- an agent that can help plan or draft content

- a reliable way to connect real customer-owned social accounts

- a workflow that can upload media, validate payloads, and publish without guessing

- a way to inspect what happened after the publish step runs

That is the important split. The agent can handle reasoning. The publishing layer should handle execution.

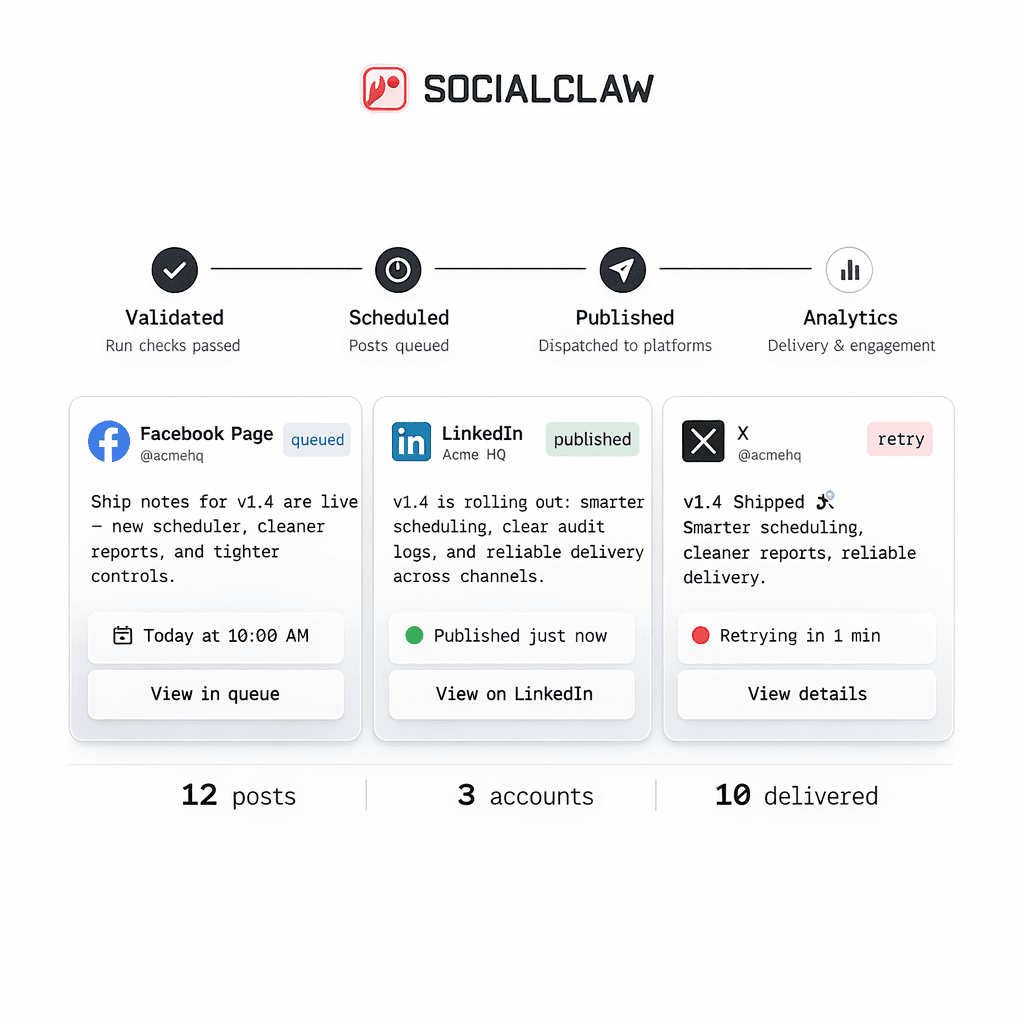

SocialClaw fits that second role. It acts as the system of record for connected customer social accounts, hosted media, schedule validation, publish execution, and delivery-state inspection. Instead of asking an agent to own every provider auth flow and every media rule directly, you give it one stable workspace to publish through.

The problem with naive AI-agent posting

It is easy to demo an agent that writes a social post. It is much harder to run that workflow safely in production.

The failure points are predictable:

- the agent drafts a post for the wrong account type

- the target provider requires media, but the payload is text-only

- a post shape is valid on one provider and invalid on another

- the workflow relies on third-party file URLs that do not survive long enough to publish

- a retry runs without clear status, so operators cannot tell whether the post failed, duplicated, or partially completed

This is why "just let the agent post directly" usually breaks down.
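The retry problem in particular comes down to missing state. As a minimal sketch, assuming the publishing layer records a status for each post (the status names below are hypothetical, not SocialClaw's actual values), a retry decision can be derived from recorded state instead of guesswork:

```python
from enum import Enum
from typing import Dict, List


class PostStatus(Enum):
    # Hypothetical status values, for illustration only.
    PENDING = "pending"
    PUBLISHED = "published"
    FAILED = "failed"
    UNKNOWN = "unknown"


def should_retry(status: PostStatus) -> bool:
    """Retry only when the recorded state shows the post did not go out."""
    # FAILED is the only state here where a retry cannot duplicate a live post.
    # UNKNOWN needs a status lookup or an operator before anything is resent.
    return status is PostStatus.FAILED


def posts_to_retry(run: Dict[str, PostStatus]) -> List[str]:
    """Return the ids of posts in a run that are safe to retry."""
    return sorted(post_id for post_id, status in run.items() if should_retry(status))
```

The point of the sketch is the conservative default: anything that is not clearly failed is left alone until someone inspects it.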

The more reliable mental model is:

- the agent decides what to publish

- the publishing layer decides how that publish should actually execute

The right architecture: agent plus publishing control plane

The clean production setup is simple.

The agent owns:

- research

- copy drafting

- schedule proposals

- payload assembly

- channel-level decisions

SocialClaw owns:

- connected account storage inside the workspace

- workspace API key auth for CLI and API execution

- hosted media upload and reusable public asset URLs

- validation before apply

- publish execution

- run, post, analytics, usage, and health inspection after publish

That split matters because social publishing is not just one API call. It is account connection, capability discovery, media handoff, validation, execution, and inspection.

The minimum workflow that actually works

In the current SocialClaw model, the human operator or end user starts in the dashboard, signs in with Google, connects accounts once, and creates or copies a workspace API key. After that, an agent or backend job can work against the same hosted workspace.

A simple CLI flow looks like this:

socialclaw login

socialclaw accounts list --json

socialclaw accounts capabilities --account-id <account-id> --json

socialclaw assets upload --file ./launch.png --json

socialclaw validate -f schedule.json --json

socialclaw apply -f schedule.json --json

socialclaw status --run-id <run-id> --json

The key idea is that the agent does not need to reconnect accounts every time it publishes. The workspace already holds those connections.

That is especially useful for agent-driven workflows because the same workspace can be reused through:

- the dashboard

- the HTTP API

- the CLI

- OpenClaw-compatible tools

Why "connect once, reuse everywhere" matters

The most fragile AI-agent demos tend to hide the account problem.

They assume the user will:

- reconnect accounts repeatedly

- use a shared "house account"

- or hardcode provider-specific auth inside the automation layer

That is not a stable system for customer-facing publishing.

SocialClaw takes the opposite approach. Customers connect accounts once inside the hosted workspace. Then the same connected resources are available to the dashboard, API, CLI, and agent workflows through that workspace identity.

This makes the publish path much easier to reason about:

- the workspace owns the connected accounts

- the agent owns the content logic

- the execution layer owns the publish state

Validation is the step most AI-agent demos skip

If you only remember one part of this article, it should be this: validation should be the default, not an optional add-on.

Different providers have different rules. SocialClaw keeps those boundaries explicit. A few current examples from the first-party truth set:

- X supports text publishing and scheduled posts, with up to four images or one video

- Instagram publishing requires media

- Discord and Telegram currently support text-only posts, or posts with one image or one video

Those differences are exactly why a separate validation step matters.
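To make the idea concrete, the three examples above can be written down as data and checked mechanically. This is a local illustration only, not SocialClaw's validator: the provider keys, the `MediaRule` fields, and the error strings are invented for the sketch, and Instagram's limits are left unset because the list above does not state them.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class MediaRule:
    requires_media: bool
    max_images: Optional[int]  # None = limit not stated in this article
    max_videos: Optional[int]
    exclusive: bool  # True = images and video cannot share one post


# Encodes only the examples listed above; keys are illustrative.
RULES = {
    "x": MediaRule(False, 4, 1, exclusive=True),
    "instagram": MediaRule(True, None, None, exclusive=False),
    "discord": MediaRule(False, 1, 1, exclusive=True),
    "telegram": MediaRule(False, 1, 1, exclusive=True),
}


def check(provider: str, images: int = 0, videos: int = 0) -> List[str]:
    """Return a list of rule violations; an empty list means the shape is fine."""
    rule = RULES[provider]
    errors = []
    if rule.requires_media and images + videos == 0:
        errors.append(f"{provider}: media is required")
    if rule.max_images is not None and images > rule.max_images:
        errors.append(f"{provider}: at most {rule.max_images} image(s)")
    if rule.max_videos is not None and videos > rule.max_videos:
        errors.append(f"{provider}: at most {rule.max_videos} video(s)")
    if rule.exclusive and images and videos:
        errors.append(f"{provider}: images and video cannot be combined")
    return errors
```

For example, `check("instagram")` reports that media is required, while `check("x", images=4)` returns an empty list. In a real setup the rules would come from the publishing layer's capability data rather than a hardcoded table.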

In practice, the agent should:

- inspect the connected account

- inspect capabilities and settings

- upload media if required

- validate the schedule

- apply only when validation passes

That staged flow is much safer than asking an agent to improvise provider behavior from memory.
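The last two steps can be enforced in code rather than left to the agent's judgment. The sketch below uses only the `validate` and `apply` commands from the CLI flow shown earlier. It assumes a non-zero exit code signals failure, which is a common CLI convention but is not confirmed here for SocialClaw; the `Runner` type and function names are made up for the example.

```python
import subprocess
from typing import Callable, Sequence

# A runner takes an argv list and returns the process exit code.
Runner = Callable[[Sequence[str]], int]


def run_cli(argv: Sequence[str]) -> int:
    """Run one CLI command and return its exit code."""
    return subprocess.run(list(argv), check=False).returncode


def validate_then_apply(schedule_path: str, runner: Runner = run_cli) -> bool:
    """Apply the schedule only if validation succeeds first."""
    validate = ["socialclaw", "validate", "-f", schedule_path, "--json"]
    apply = ["socialclaw", "apply", "-f", schedule_path, "--json"]
    # Assumption: a non-zero exit code means the step failed.
    if runner(validate) != 0:
        return False  # never reach apply with an unvalidated schedule
    return runner(apply) == 0
```

Passing the runner in as a parameter keeps the gate easy to exercise in tests without touching real accounts.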

Media handoff is part of the product, not a side detail

Media is where many automation setups get brittle.

If you ask an agent to publish from ad hoc file URLs, the workflow depends on a file host the publishing system does not control. That makes retries, reuse, and long-running schedules harder.

SocialClaw's model is cleaner:

- upload the file once

- get a hosted public URL back

- reuse that URL in schedule files or payloads

That means media handoff is part of the publishing system itself rather than an unrelated external dependency.
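On the caller's side, "upload once, reuse the URL" can be as simple as a small cache keyed by file content. In this sketch the `upload` callable is a stand-in for whatever performs the real upload (for instance a wrapper around `socialclaw assets upload`); `AssetCache` and its method names are hypothetical.

```python
import hashlib
from typing import Callable, Dict


class AssetCache:
    """Upload each distinct file once and reuse the hosted URL afterwards."""

    def __init__(self, upload: Callable[[bytes], str]) -> None:
        # `upload` is a placeholder for the real upload call; it returns a URL.
        self._upload = upload
        self._urls: Dict[str, str] = {}

    def url_for(self, content: bytes) -> str:
        # Key by content hash so renamed copies of a file are not re-uploaded.
        digest = hashlib.sha256(content).hexdigest()
        if digest not in self._urls:
            self._urls[digest] = self._upload(content)
        return self._urls[digest]
```

A retry or a second schedule that references the same image then resolves to the same hosted URL without another upload.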

Human review still fits inside an AI workflow

"AI automation" does not have to mean blind posting.

SocialClaw's current workflow model already supports validation, campaign preview, apply, and inspection as separate stages. That gives teams room to keep human review where it matters most:

- review the draft

- preview the campaign

- validate the publish shape

- apply only after the payload is acceptable

That is a much better fit for serious publishing than pretending every workflow should be fully autonomous.
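One way to keep those stages honest is to model them as an ordered sequence that only moves forward on explicit approval. The stage names below mirror the list above plus a final applied state; the `Stage` enum and `advance` function are illustrative, not part of SocialClaw.

```python
from enum import IntEnum


class Stage(IntEnum):
    DRAFTED = 0
    REVIEWED = 1
    PREVIEWED = 2
    VALIDATED = 3
    APPLIED = 4


def advance(current: Stage, approved: bool) -> Stage:
    """Move to the next stage only when the current one was approved."""
    if not approved or current is Stage.APPLIED:
        return current  # rejected work stays put; APPLIED is terminal
    return Stage(current + 1)
```

Because stages cannot be skipped, a payload reaches the applied state only after it has passed review, preview, and validation in order.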

A simple multi-channel example

Imagine an agent preparing a product update.

The agent drafts:

- an X post with one image

- a LinkedIn page announcement

- a Discord channel notification

The workflow can run like this:

- The customer connects the X account, LinkedIn page, and Discord webhook once in SocialClaw.

- The agent uploads the launch image through SocialClaw.

- The workflow validates the schedule against the actual connected targets.

- The run is applied only after validation passes.

- The operator inspects the run and post state after execution.

That is the practical value of an AI-agent publishing stack. The agent handles content and orchestration. SocialClaw handles the execution backend.
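For the agent's side of this example, payload assembly might look like the sketch below. SocialClaw's real `schedule.json` schema is not shown in this article, so every field name here (`posts`, `account`, `text`, `media`, `publish_at`) and every account identifier is a placeholder. The point is only that one uploaded image URL is reused and each target gets its own post entry.

```python
import json


def build_schedule(image_url: str, publish_at: str) -> dict:
    """Assemble one post per target, reusing a single hosted image URL."""
    # Hypothetical shape: the real schedule.json schema is not shown here.
    targets = [
        ("x-account", "We just shipped a product update.", [image_url]),
        ("linkedin-page", "Announcing our latest product update.", []),
        ("discord-webhook", "The product update is live.", []),
    ]
    return {
        "posts": [
            {"account": account, "text": text, "media": media, "publish_at": publish_at}
            for account, text, media in targets
        ]
    }


if __name__ == "__main__":
    schedule = build_schedule("https://assets.example/launch.png", "2030-01-01T09:00:00Z")
    print(json.dumps(schedule, indent=2))
```

The resulting file would then go through validation before apply, so any mismatch between this assumed shape and the connected targets surfaces before anything is published.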

When this setup is a good fit

This pattern makes the most sense when you are building:

- an embedded SaaS publishing feature

- an internal operator tool

- an OpenClaw-compatible publishing workflow

- a developer-controlled content pipeline that needs real account state and publish inspection

If you only need a lightweight calendar UI for a small team, a generic scheduler may be enough. But if you need a stable execution layer behind an agent, a scheduler alone is usually not enough.

Final takeaway

The hard part of AI-agent social media automation is not getting the model to write a post.

The hard part is making publishing reliable:

- connected accounts

- hosted media

- provider-aware validation

- controlled execution

- inspectable delivery state

That is the job SocialClaw is built to do.

Next steps: